Image contributed by Jon Peddie; Source: NVIDIA

AI brought higher resolution, higher frame rates, and beautiful images.

Fast ray tracing has been a dream since the introduction of the PC. J. Turner Whitted’s recursive ray-tracing algorithm inspired the dream,[i] and the pursuit has been something of a quest for the holy grail ever since.[ii]

At SIGGRAPH 2018, two companies demonstrated real-time ray tracing: Adshir (now part of Snap) on mobile devices[iii] and NVIDIA on PCs and workstations.[iv]

The demos were impressive but not quite all that had been hoped for. NVIDIA’s offering was initially for workstations only, not gaming, and resolution traded off against frame rate (frames per second, FPS): increase one, and the other dropped. By February 2019, NVIDIA had introduced a solution offering fast (>30 FPS) spatial image upscaling, although it required specific training for each game.
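A back-of-the-envelope sketch makes the resolution-versus-FPS trade-off concrete. It assumes, purely for illustration, that the GPU can trace a fixed number of rays per second and that every pixel costs the same number of rays; the budget below is a made-up number chosen so the results divide evenly, not a measured figure for any GPU, and `max_fps` is a hypothetical helper.

```python
def max_fps(rays_per_second: float, width: int, height: int,
            rays_per_pixel: int = 1) -> float:
    """Frames per second a fixed ray budget can sustain at a given resolution."""
    rays_per_frame = width * height * rays_per_pixel
    return rays_per_second / rays_per_frame


# Hypothetical ray budget (an assumption, not a hardware specification).
BUDGET = 248_832_000  # rays per second

for w, h in [(1920, 1080), (3840, 2160)]:
    print(f"{w}x{h}: {max_fps(BUDGET, w, h):.0f} FPS")
```

With this budget, 1920x1080 sustains 120 FPS, while 3840x2160 (four times the pixels) drops to 30 FPS. Rendering at the lower resolution and upscaling the result is the escape hatch that DLSS and its competitors exploit.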

NVIDIA introduced Volta, the first microarchitecture with tensor cores, in 2017. Tensor cores are specifically engineered to deliver higher deep-learning performance than traditional CUDA cores (shaders). The company cleverly applied those AI cores to improve ray-tracing performance, calling the technique Deep Learning Super Sampling (DLSS).
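What a tensor core computes is a small matrix multiply-accumulate: on Volta, each tensor core operates on 4×4 matrices, computing D = A × B + C with FP16 inputs and FP16 or FP32 accumulation. The sketch below shows only that arithmetic in plain Python; it says nothing about how the hardware schedules the work, and `to_fp16` and `mma_4x4` are hypothetical names. As a simplification, products are accumulated in Python’s double-precision floats rather than FP32.

```python
import struct
from typing import List

Matrix = List[List[float]]


def to_fp16(x: float) -> float:
    """Round a value to IEEE half precision (struct format 'e') and back."""
    return struct.unpack("e", struct.pack("e", x))[0]


def mma_4x4(a: Matrix, b: Matrix, c: Matrix) -> Matrix:
    """D = A x B + C on 4x4 matrices, with inputs rounded to FP16.

    Simplification: accumulation uses Python floats (double precision),
    not the FP16/FP32 accumulators of the real hardware.
    """
    n = 4
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            acc = c[i][j]
            for k in range(n):
                acc += to_fp16(a[i][k]) * to_fp16(b[k][j])
            d[i][j] = acc
    return d


ones = [[1.0] * 4 for _ in range(4)]
print(mma_4x4(ones, ones, ones))  # every entry is 1*1*4 + 1 = 5.0
```

Neural-network inference is dominated by exactly this kind of operation, which is why dedicating silicon to it pays off for AI upscaling.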

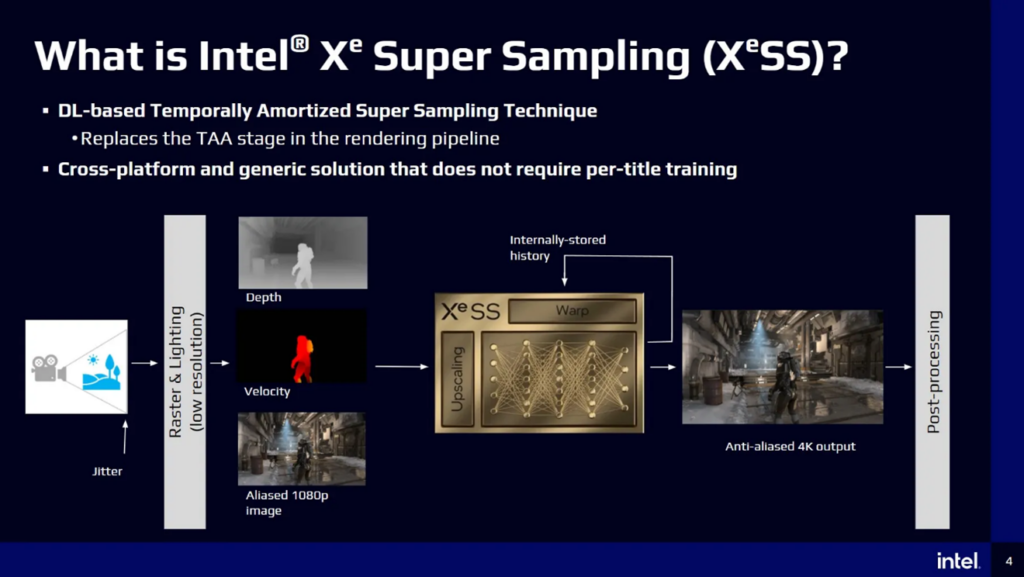

Intel introduced its Xe Super Sampling (XeSS) as an upscaling feature of Intel Arc Alchemist graphics boards in 2022.[v]

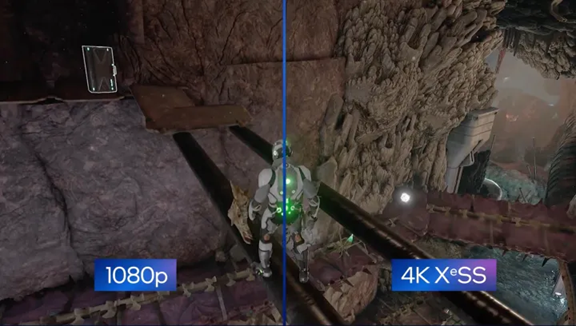

Figure 1. Better resolution and ray tracing with Intel’s XeSS. (Source: Intel)

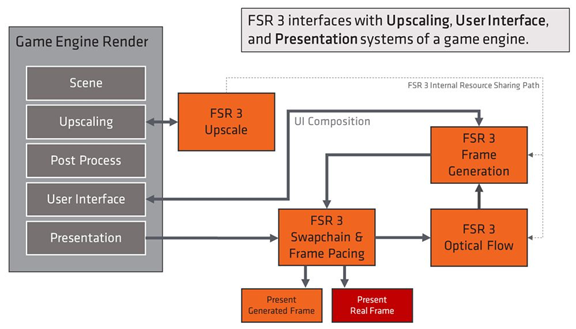

AMD did not add frame generation to its FidelityFX Super Resolution (FSR) until FSR 3, released in September 2023.[vi]

The basic concept behind DLSS and its competitors is to analyze sequential frames and motion data to create additional high-quality frames. DLSS can render a frame at a lower resolution, use that sample to construct a higher-resolution frame, and (from DLSS 3 on) generate additional frames. The result is a higher frame rate with high-quality ray tracing.
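A toy one-dimensional sketch of the temporal idea: render at half resolution each frame, shift (jitter) the sample position by a sub-pixel offset from frame to frame, and scatter the samples into a full-resolution history buffer. It assumes a static scene, so motion vectors are zero and no reprojection is needed; `scene`, `render_low_res`, and `temporal_upscale` are hypothetical names. Real upscalers also reproject the history with motion vectors and decide how far to trust it (with a trained network in DLSS and XeSS), none of which is modeled here.

```python
from typing import Callable, List, Optional


def scene(x: int) -> float:
    """Toy ground truth: brightness of full-resolution pixel x (hypothetical)."""
    return float((x * 7) % 10)


def render_low_res(width_low: int, factor: int, jitter: int,
                   shade: Callable[[int], float]) -> List[float]:
    """Shade one sample per low-res pixel, offset by this frame's sub-pixel jitter."""
    return [shade(i * factor + jitter) for i in range(width_low)]


def temporal_upscale(width_low: int, factor: int, frames: int,
                     shade: Callable[[int], float]) -> List[Optional[float]]:
    """Accumulate jittered low-res frames into a full-resolution history buffer."""
    history: List[Optional[float]] = [None] * (width_low * factor)
    for frame in range(frames):
        jitter = frame % factor  # cycle through the sub-pixel positions
        samples = render_low_res(width_low, factor, jitter, shade)
        # Static scene assumed: motion vectors are zero, so each sample lands
        # in the full-resolution pixel it was taken from, with no reprojection.
        for i, value in enumerate(samples):
            history[i * factor + jitter] = value
    return history


one_frame = temporal_upscale(width_low=4, factor=2, frames=1, shade=scene)
two_frames = temporal_upscale(width_low=4, factor=2, frames=2, shade=scene)
print(one_frame)   # every other full-res pixel is still unknown (None)
print(two_frames)  # matches [scene(x) for x in range(8)]
```

Each frame shades only half the pixels, yet two consecutive frames together recover the full-resolution image; that is the sense in which sequential frames, not a single frame, feed the higher-resolution result.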

Figure 2. FSR 3 uses AMD’s Fluid Motion Frames (AFMF) optical flow technology and temporal game data, such as motion vectors, to generate additional high-quality frames for a higher frame rate in supported games. (Source: AMD)

The heart of the process is super resolution (SR), the core image-upscaling technique, combined with frame generation (FG), which roughly doubles frame rates by inserting an AI-generated frame between rendered frames. In essence, the AI engine (the tensor cores, assisted by the shaders) examines consecutive frames, derives a field of motion vectors, and uses those vectors to synthesize a new intermediate frame. The technique is clever in concept but relies on sufficient tensor cores (matrix multipliers) and high-speed operation, which, in turn, requires high-speed local memory (the frame buffer).
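A toy sketch of the warping step behind frame generation, using classical motion-compensated interpolation rather than a neural network: each pixel of Frame 1 is moved halfway along its motion vector to synthesize the in-between frame. Assumptions: one-dimensional frames, whole-pixel motion vectors supplied by the renderer, and moving pixels always in front of static ones; `generate_midframe` is a hypothetical name.

```python
from typing import List, Optional


def generate_midframe(frame1: List[float], motion: List[int]) -> List[Optional[float]]:
    """Forward-warp frame1 halfway along per-pixel motion vectors (pixels per frame).

    Pixels left as None were uncovered by motion (disocclusions) and must be
    filled in some other way.
    """
    width = len(frame1)
    mid: List[Optional[float]] = [None] * width
    # Pass 1: pixels that do not move stay where they are.
    for x, (value, mv) in enumerate(zip(frame1, motion)):
        if mv == 0:
            mid[x] = value
    # Pass 2: moving pixels are splatted halfway along their motion vector
    # (rounded down). Assumption: they are in front, so they overwrite static ones.
    for x, (value, mv) in enumerate(zip(frame1, motion)):
        if mv != 0:
            target = x + mv // 2
            if 0 <= target < width:
                mid[target] = value
    return mid


# A two-pixel object moving 4 pixels per frame to the right.
mid = generate_midframe([0, 0, 9, 9, 0, 0, 0, 0], [0, 0, 4, 4, 0, 0, 0, 0])
print(mid)  # [0, 0, None, None, 9, 9, 0, 0]
```

The `None` holes mark background the moving object has just revealed. Nothing in Frame 1 says what belongs there, and guessing such regions plausibly is a large part of what the trained network in an AI frame generator is for.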

In 2020 and 2021, the technology was added to many newly released games and to game engines such as Unreal Engine[vii] and Unity.[viii] Still, some fine-tuning was required to achieve good image quality and smoothness.

Figure 3. Intel’s XeSS AI ray-tracing logic flow. (Source: Intel)

The early DLSS, XeSS, and FSR versions offered super resolution but little else. In the fall of 2022, NVIDIA introduced DLSS 3, which added FG: it analyzed sequential frames to create additional frames for smoother motion. This was a real AI enhancement, but it still did not deliver full ray tracing. At frame rates as high as 100 FPS, the AI-created interframes were not noticeable; if they lacked detail or contained image errors, they flashed by so quickly that the eye’s persistence of vision smoothed them out.
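The persistence argument can be quantified with simple frame-pacing arithmetic. This sketch assumes perfectly even pacing and ignores the time it takes to generate a frame; `frame_generation_timing` is a hypothetical helper.

```python
from typing import Tuple


def frame_generation_timing(rendered_fps: float,
                            generated_per_rendered: int = 1) -> Tuple[float, float]:
    """Return (displayed FPS, milliseconds each displayed frame stays on screen)."""
    displayed_fps = rendered_fps * (1 + generated_per_rendered)
    on_screen_ms = 1000.0 / displayed_fps
    return displayed_fps, on_screen_ms


displayed, ms = frame_generation_timing(50)
print(f"{displayed:.0f} FPS displayed, each frame visible for {ms:.0f} ms")
```

Rendering 50 FPS and inserting one generated frame after each rendered one yields 100 FPS on screen, so any flawed interframe is visible for only about 10 ms before a rendered frame replaces it.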

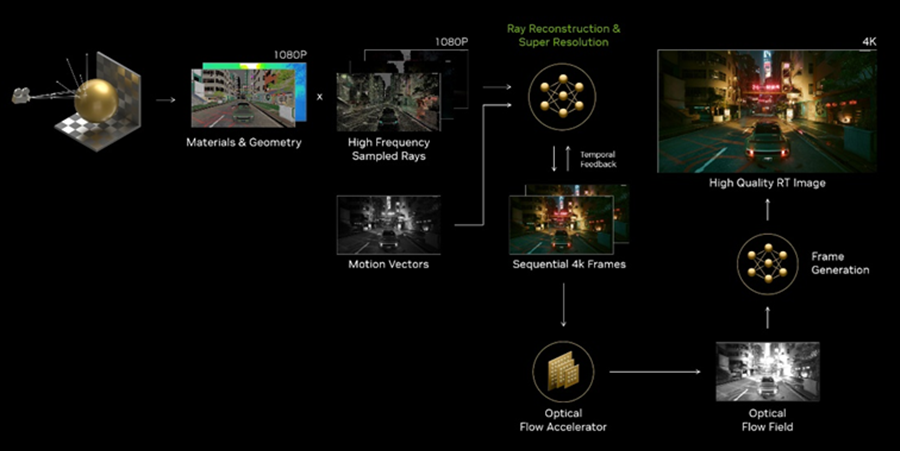

The real breakthrough for NVIDIA came in August 2023 with the introduction of DLSS 3.5 and its ray reconstruction (RR), which improved ray-traced lighting, including global illumination. RR replaced multiple hand-tuned denoisers with an AI network added to NVIDIA’s existing DLSS 3. With all ray-tracing features turned on, DLSS 3.5 could reach 108 FPS while delivering high-resolution images in real time.

Figure 4. DLSS 3.5 replaced hand-tuned denoisers with a supercomputer-trained AI network that generates higher-quality pixels in between sampled rays. (Source: NVIDIA)
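For contrast, a minimal sketch of a hand-tuned spatial denoiser of the kind ray reconstruction replaces: a box filter that averages each pixel with its neighbors. The one-dimensional "image", the alternating noise pattern, and the fixed radius are assumptions for illustration, and `box_denoise` and `mean_abs_error` are hypothetical names; production denoisers are considerably more elaborate.

```python
from typing import List


def box_denoise(noisy: List[float], radius: int = 1) -> List[float]:
    """Average each pixel with neighbors within `radius` (window clamped at the borders).

    `radius` is a fixed, hand-picked parameter.
    """
    n = len(noisy)
    out = []
    for x in range(n):
        lo, hi = max(0, x - radius), min(n, x + radius + 1)
        window = noisy[lo:hi]
        out.append(sum(window) / len(window))
    return out


def mean_abs_error(a: List[float], b: List[float]) -> float:
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


truth = [0.5] * 8                                                # flat, noise-free lighting
noisy = [0.5 + (0.3 if x % 2 == 0 else -0.3) for x in range(8)]  # toy sampling noise
denoised = box_denoise(noisy)
print(mean_abs_error(noisy, truth), mean_abs_error(denoised, truth))
```

On this flat signal the filter cuts the error sharply, but a box filter blurs genuine edges just as readily as noise. Picking the radius is a trade-off between residual noise and lost detail, and that per-effect hand tuning is what a trained network takes over.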

However, NVIDIA’s DLSS was proprietary and not backward compatible: it required NVIDIA RTX GPUs with tensor cores. AMD sought to make FSR device independent and largely succeeded, although the reasons for such altruism were difficult to understand. Intel chose a similar approach with XeSS. Even so, not all games offer global illumination as an option, and some can support it only by lowering resolution.

NVIDIA introduced its tensor-core AI engine in a GPU over a year before it added intersect engines for ray tracing, which the company calls RT cores. All three GPU makers incorporate a bounding volume hierarchy (BVH) cache to reduce the average latency of fetching BVH data and can process multiple rays for higher efficiency. Those techniques were well understood. Adding AI analysis and intermediate frame generation raised performance and display resolution and brought the full spectrum of ray tracing, including global illumination, to the GPU.
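A minimal sketch of the BVH traversal that RT cores accelerate in hardware: a stack-based walk that tests the ray against each node’s axis-aligned bounding box (the classic slab test) and skips entire subtrees the ray misses. The `Node` layout and the function names are hypothetical; production BVHs use more compact node layouts and follow the box tests with exact ray-triangle tests, which are omitted here.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Node:
    lo: Vec3                                          # AABB minimum corner
    hi: Vec3                                          # AABB maximum corner
    children: List["Node"] = field(default_factory=list)
    prims: List[int] = field(default_factory=list)    # primitive IDs (leaves only)


def ray_hits_box(origin: Vec3, direction: Vec3, lo: Vec3, hi: Vec3) -> bool:
    """Slab test: clip the ray's [0, inf) interval against each axis' pair of planes."""
    t_near, t_far = 0.0, math.inf
    for o, d, a, b in zip(origin, direction, lo, hi):
        if d == 0.0:
            # Parallel to this slab: a hit is possible only if the origin lies inside it.
            if o < a or o > b:
                return False
            continue
        t1, t2 = (a - o) / d, (b - o) / d
        t_near = max(t_near, min(t1, t2))
        t_far = min(t_far, max(t1, t2))
    return t_near <= t_far


def traverse(root: Node, origin: Vec3, direction: Vec3) -> List[int]:
    """Collect primitive IDs from every leaf whose box the ray enters."""
    candidates: List[int] = []
    stack = [root]
    while stack:
        node = stack.pop()
        if not ray_hits_box(origin, direction, node.lo, node.hi):
            continue                       # the whole subtree is skipped
        if node.children:
            stack.extend(node.children)    # interior node: visit children later
        else:
            candidates.extend(node.prims)  # leaf: exact triangle tests would go here
    return candidates


left = Node((0, 0, 0), (1, 1, 1), prims=[0])
right = Node((5, 5, 5), (6, 6, 6), prims=[1])
root = Node((0, 0, 0), (6, 6, 6), children=[left, right])
print(traverse(root, (-1, 0.5, 0.5), (1, 0, 0)))  # [0]: only the left box is hit
```

Every iteration of the loop fetches node data, so keeping recently used nodes close to the intersection units (the BVH cache) and keeping many rays in flight are what make this walk fast in hardware.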

This article was authored by Jon Peddie of Jon Peddie Research.

[i] Whitted, J. Turner. An Improved Illumination Model for Shaded Display, Proceedings of the 6th Annual Conference on Computer Graphics and Interactive Techniques (1979)

[ii] Peddie, Jon. Ray Tracing: A Tool for All, Springer Link (2019), https://www.amazon.com/Ray-Tracing-Tool-Jon-Peddie/dp/3030174891

[iii] Peddie, Jon. Real-time ray tracing shown by Adshir at SIGGRAPH, TechWatch (August 5, 2019), https://www.jonpeddie.com/techwatch/realtime-ray-tracing-shown-by-adshir-at-siggraph/

[iv] Herrera, Alex. Nvidia revealed the Turing GPU architecture, TechWatch (October 19, 2018), https://www.jonpeddie.com/techwatch/the-tale-of-turing/

[v] Roach, Jacob. What is Intel XeSS, and how does it compare to Nvidia DLSS, Digital Trends (October 5, 2022), https://www.digitaltrends.com/computing/what-is-intel-xess/

[vi] Blake-Davies, Alexander. AMD FSR 3 Now Available, AMD Community (September 29, 2023), https://community.amd.com/t5/gaming/amd-fsr-3-now-available/ba-p/634265

[vii] Nvidia DLSS Plugin and Reflex Now Available for Unreal Engine, Nvidia Developer Blog (February 11, 2021). Retrieved February 7, 2022.

[viii] Nvidia DLSS Natively Supported in Unity 2021.2, Nvidia Developer Blog (April 14, 2021). Retrieved February 7, 2022.