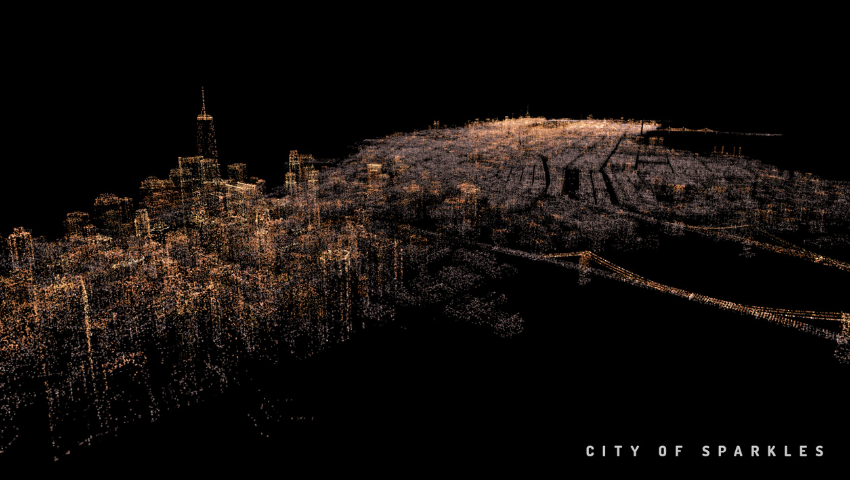

“City of Sparkles” © 2019 Amber Garage

At SIGGRAPH 2019, one of the experiences in the Immersive Pavilion will transport you from Los Angeles to New York City and San Francisco. That experience, “City of Sparkles,” uses Twitter to map city dwellers’ emotions and awaken familiar feelings in audience members, culminating in a unique augmented and virtual reality project.

We had the opportunity to talk with Yang Liu, who represented his fellow creators Botao Hu and Ran Duan, about how “City of Sparkles” came to life, its use of AR and VR, and what the team hopes SIGGRAPH 2019 attendees will take away from the experience.

SIGGRAPH: Loneliness is a theme in “City of Sparkles.” What about the experience evokes loneliness? How have participants responded?

Yang Liu (YL): First, the theme is built into the basic setup of the experience. The feeling of loneliness kicks in the moment you put on the VR headset. Suddenly, you’re isolated from the physical world in terms of both vision and sound, and you enter a different world… on your own. The contrast between the small player and the enormous space presented in the experience strengthens that feeling of loneliness.

Second, the visuals and sound work together to trigger something deep in [a participant’s] memory that is typically connected to loneliness. For example, the red light in the dark sky flickers and breathes the same way as the aircraft warning lights on skyscrapers, which I saw whenever I felt lonely and tried to see stars from a big, crowded city. The slight, sparse piano notes remind me of those midnights when I was squeezed into my small room, trying to fall asleep. Many [participants] have commented that the scene reminded them of times they had felt lonely, especially those who have lived in New York City or San Francisco.

Lastly, the curated tweets, presented along the emotion curve and guided by the visuals and sound, make a clear statement on the theme.

SIGGRAPH: Describe how you used Natural Language Processing (NLP) to analyze sentiment for this project.

YL: We mainly used the sentiment analysis library provided by the Natural Language Toolkit (NLTK) and ran a Naïve Bayes classifier trained on NLTK corpora. The classifier analyzed the main content of each tweet, ignoring URLs, names, hashtags, and places. [We] then got a score from -1 to 1, where -1 means most negative, 0 means neutral, and 1 means most positive. We also tried some NLP services from cloud computing providers, hoping they would give better results thanks to larger amounts of training data, but they turned out to be too expensive.
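The interview names NLTK’s Naïve Bayes classifier but not the code behind it. As a dependency-free illustration, here is a minimal sketch of the same idea in plain Python: a two-class multinomial Naïve Bayes written by hand (not NLTK’s `NaiveBayesClassifier`), trained on a made-up toy set standing in for the NLTK corpora. The regex cleanup, the hypothetical `NaiveBayesSentiment` class, and the mapping of P(positive) onto [-1, 1] as 2·P − 1 are all assumptions; the sketch strips URLs, @mentions, and hashtags but does not attempt to remove names or places.

```python
import math
import re
from collections import Counter

# Illustrative cleanup rules (assumptions): strip URLs, @mentions, and hashtags.
# Names and places are NOT removed here; that would need a named-entity step.
URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
HASHTAG_RE = re.compile(r"#\w+")
WORD_RE = re.compile(r"[a-z']+")


def clean_tokens(text):
    """Remove URLs, @mentions, and hashtags, then lowercase and split into words."""
    for pattern in (URL_RE, MENTION_RE, HASHTAG_RE):
        text = pattern.sub(" ", text)
    return WORD_RE.findall(text.lower())


class NaiveBayesSentiment:
    """Hypothetical two-class multinomial Naive Bayes with add-one smoothing."""

    def __init__(self, labeled_texts):
        self.word_counts = {"pos": Counter(), "neg": Counter()}
        self.doc_counts = Counter()
        for text, label in labeled_texts:
            self.doc_counts[label] += 1
            self.word_counts[label].update(clean_tokens(text))
        self.vocab = set(self.word_counts["pos"]) | set(self.word_counts["neg"])
        self.totals = {c: sum(wc.values()) for c, wc in self.word_counts.items()}

    def _log_joint(self, label, tokens):
        """log P(label) plus the sum of log P(word | label) over known words."""
        logp = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        denom = self.totals[label] + len(self.vocab)
        for tok in tokens:
            if tok in self.vocab:  # words unseen in training carry no evidence
                logp += math.log((self.word_counts[label][tok] + 1) / denom)
        return logp

    def score(self, text):
        """Map P(pos | text) onto [-1, 1]: -1 most negative, 0 neutral, 1 most positive."""
        tokens = clean_tokens(text)
        diff = self._log_joint("neg", tokens) - self._log_joint("pos", tokens)
        p_pos = 1.0 / (1.0 + math.exp(max(min(diff, 700.0), -700.0)))
        return 2.0 * p_pos - 1.0


# Toy training data standing in for the NLTK corpora (made up for illustration).
train = [
    ("I love this city, what a beautiful night", "pos"),
    ("so happy to be home with friends", "pos"),
    ("great coffee and a wonderful walk", "pos"),
    ("feeling lonely and sad tonight", "neg"),
    ("this city is cold and I feel so isolated", "neg"),
    ("terrible day, everything is awful", "neg"),
]
clf = NaiveBayesSentiment(train)
print(clf.score("what a wonderful, happy night https://t.co/x #nyc"))  # positive
print(clf.score("so lonely and sad @someone"))                         # negative
```

With balanced classes, a tweet that is nothing but a URL, mention, or hashtag scores exactly 0, which matches the “0 means neutral” end of the scale described above.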

SIGGRAPH: How did you curate the content for “City of Sparkles”? Did you discover tweets that matched the desired sentiment, or did you look at certain hashtags/feeds/discussions? Were any explicitly solicited from specific users?

YL: We started by narrowing down to tweets posted by New York City and San Francisco residents, as they are the people whose stories we wanted to present in the virtual cities. There are two collections of tweets in “City of Sparkles.” The first is random tweets picked purely by programs; each appears at the actual location where it was posted as the audience flies around the virtual city, or at a random position if no geodata is provided. We filtered out tweets with neutral sentiment to make the world more emotional. The second collection consists of tweets we curated manually. We filtered tweets by keywords like “lonely,” “isolated,” or “deserted,” and then tagged them with “love,” “city,” “deep,” “positive,” etc. Those tweets appear as red lights in the experience to guide players and make the theme clear.
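The two collections can be sketched as a pair of filters plus a placement rule. This is a minimal illustration under stated assumptions, not the team’s pipeline: the `NEUTRAL_BAND` cutoff, the `Tweet` record, and the `place` helper are hypothetical, locations are assumed to be already projected into city-model coordinates, and only the keyword pre-filter is automated, since the tagging that followed was done by hand.

```python
import random
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical parameters: the interview gives neither a neutrality cutoff nor a full keyword list.
NEUTRAL_BAND = 0.2
THEME_KEYWORDS = ("lonely", "isolated", "deserted")


@dataclass
class Tweet:
    text: str
    city: str                                   # e.g. "NYC" or "SF"
    sentiment: float                            # classifier score in [-1, 1]
    geo: Optional[Tuple[float, float]] = None   # (x, y) in city-model coordinates, if tagged


def is_emotional(tweet: Tweet) -> bool:
    """Keep only clearly positive or negative tweets for the random collection."""
    return abs(tweet.sentiment) > NEUTRAL_BAND


def is_theme_candidate(tweet: Tweet) -> bool:
    """Keyword pre-filter for the curated collection; tagging afterward is manual."""
    text = tweet.text.lower()
    return any(word in text for word in THEME_KEYWORDS)


def place(tweet: Tweet, bounds, rng: random.Random) -> Tuple[float, float]:
    """Use the tweet's own location if present, otherwise a random point inside the city bounds."""
    if tweet.geo is not None:
        return tweet.geo
    (xmin, ymin), (xmax, ymax) = bounds
    return (rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))


rng = random.Random(0)
tweets = [
    Tweet("Another night alone, so lonely in this city", "NYC", -0.8),
    Tweet("Just grabbed a sandwich", "SF", 0.05, geo=(3.0, 4.0)),
    Tweet("Best ramen ever!!", "SF", 0.9, geo=(1.0, 2.0)),
]
random_pool = [t for t in tweets if is_emotional(t)]  # drops the near-neutral sandwich tweet
curated_candidates = [t for t in tweets if is_theme_candidate(t)]
positions = [place(t, ((0.0, 0.0), (10.0, 10.0)), rng) for t in random_pool]
```

Keeping the sentiment filter and the keyword filter as separate predicates reflects the fact that the two collections are selected by different criteria and shown differently (scattered sparkles versus guiding red lights).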

An interesting fact is that we tried to use only tweets that had a geolocation so that we could place them at the exact location where they were posted. We gave up that idea, though, because we found that geotagged tweets tended to be boring. People turn on geolocation mostly when they want to show off nice food or a good trip, and rarely when they want to share emotions.

SIGGRAPH: You mentioned in a video that “City of Sparkles” was originally a virtual reality project that you later migrated to augmented reality. Expand on how you transitioned the project to AR.

YL: We migrated the project to Magic Leap as an experiment on how AR could potentially help tell the story and to go deeper into the question of whether AR and VR are actually mandatory to convey the theme.

The majority of the work was optimizing the whole rendering pipeline so that it can run on a mobile device, given it was originally designed for a desktop computer. We optimized and simplified the shaders we used to compute particle motions, changed the pipeline to use GPU instancing, and managed to render millions of particles on Magic Leap.
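The interview doesn’t detail the renderer, so the following is only a CPU-side Python model of the general GPU-instancing idea, not the team’s Unity code. Static per-particle data is packed once into a single flat buffer; motion is a cheap function of that data plus time (the kind of math a simplified shader evaluates per instance); and drawing is split into as few instanced calls as the graphics API allows. The buffer layout, the sine-wave drift in the hypothetical `shader_position`, and the `max_per_call` cap are all assumptions for illustration.

```python
import math
import random
from array import array

FIELDS = 4  # hypothetical per-instance layout: base_x, base_y, base_z, phase


def build_instance_buffer(count, extent, seed=0):
    """Pack static per-particle data into one flat float32 array (uploaded to the GPU once)."""
    rng = random.Random(seed)
    buf = array("f")
    for _ in range(count):
        buf.extend((rng.uniform(0.0, extent), rng.uniform(0.0, 0.2 * extent),
                    rng.uniform(0.0, extent), rng.uniform(0.0, 2.0 * math.pi)))
    return buf


def shader_position(buf, i, t, amplitude=0.5):
    """CPU stand-in for per-instance vertex-shader math: only `t` changes per frame."""
    x, y, z, phase = buf[i * FIELDS:(i + 1) * FIELDS]
    return x, y + amplitude * math.sin(t + phase), z


def instanced_batches(count, max_per_call):
    """Split `count` instances into (first_instance, instance_count) ranges, one per draw call."""
    return [(start, min(max_per_call, count - start))
            for start in range(0, count, max_per_call)]


buf = build_instance_buffer(2500, extent=100.0)
print(len(buf))                       # 10000 floats: 2500 instances x 4 fields
print(instanced_batches(2500, 1000))  # [(0, 1000), (1000, 1000), (2000, 500)]
print(shader_position(buf, 0, t=0.5))
```

The design point is that, per frame, the CPU only supplies a time value and issues a handful of draw calls instead of updating each particle individually, which is one reason instancing helps push particle counts into the millions on constrained hardware.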

Another part of the work was redesigning and reimplementing the control and navigation system, because it doesn’t make sense to “fly” in AR: you’re still in the physical world, where you cannot fly. So, we changed the navigation from flying around the city to manipulating a city sandbox.

SIGGRAPH: What are the advantages of presenting “City of Sparkles” in AR? VR?

YL: The feeling of loneliness in this experience depends heavily on the feeling of being small in a large, empty space. VR emphasizes that contrast by conveying a sense of depth, which maximizes the parallax effect while the player flies slowly. Parallax combined with depth tells players their flying speed, and from that speed they get an immediate sense of how large the city is. Such an immediate, direct spatial sense is unlikely to come through a normal 2D display. In addition, a VR headset naturally isolates the audience from the outside world and helps them turn their attention inward, which is very important given that “City of Sparkles” is designed as a VR installation in a public space. We also leveraged the virtual hands provided by Oculus Touch controllers to give the audience a feeling of touching souls when they touch the red lights, which is hard to simulate with a mouse or gamepad.
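The parallax argument can be made concrete with basic geometry. This is an illustrative sketch, not a description of the project’s implementation; the symbols and numbers are assumptions. For a player flying at speed $v$, a point at distance $r$ whose direction makes angle $\theta$ with the direction of flight sweeps across the view at angular speed

$$\omega = \frac{v \sin\theta}{r}, \qquad\text{so}\qquad v = \frac{\omega\, r}{\sin\theta}.$$

Stereo depth in the headset supplies $r$, so speed can in principle be recovered from the observed $\omega$. For example, a building directly abeam ($\theta = 90^\circ$) at a hypothetical $r = 200$ m that drifts by $0.5^\circ/\text{s} \approx 0.00873$ rad/s implies $v \approx 0.00873 \times 200 \approx 1.75$ m/s. Without stereo depth, the same $\omega$ is consistent with a nearby object passing slowly or a distant one passing quickly, since only the ratio $v/r$ is observed, so speed and scale stay ambiguous.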

Our AR experiments tell us that, in general, AR is not an ideal medium for presenting “City of Sparkles,” mainly because we lose both the isolation of the audience and the feeling of flying. However, when we scale the AR experience up to actual city size and overlay the particles on the real city, it’s a whole different story. It feels very different when you see tweets popping up in the physical world that talk about the exact location you’re looking at. We might go further in this direction, but that would be a different work with a different theme.

SIGGRAPH: What challenges did you encounter when creating “City of Sparkles,” both for AR and VR?

YL: The biggest challenge for us was changing our way of thinking. As engineers, we’re well trained in bottom-up thinking: “This technology looks cool; what can I do with it?” Making a piece of art typically requires top-down thinking: “I want to say something; what medium and technology can help me convey it?” We managed to give “City of Sparkles” a clear theme of loneliness by learning from the many times we got trapped in the details and forgot the “big picture,” and by forcing every element of the work to stick to that theme and convey that message.

Second, the visual design itself was a challenge for us. We can’t claim to be visual art experts by any means. So, to make the art look good without that natural intuition, we had to try tons of different colors and shapes, and we built tools that let us iterate on ideas rapidly. For example, we could adjust the curve of some color samples in Photoshop and see the colors of the virtual city change simultaneously, as well as adjust the city’s noise and randomness.

We also put a lot of effort into sound design. We wanted music that strengthened the emotion, but to stay consistent with the abstract visual style, we didn’t want it to sound realistic or like any physical instrument. So, we composed synthesized music and even wrote a plugin to iterate on sounds with virtual synthesizers.

Lastly, rendering and animating 3 million particles was a technical challenge. We built the experience in Unity but had to implement our own particle system, specialized and therefore highly optimized for our use case. Later on, we even had to make it run on a mobile device.

SIGGRAPH: What do you hope SIGGRAPH 2019 attendees take away from “City of Sparkles”?

YL: The experience in the Immersive Pavilion will be in VR. We hope attendees enjoy a virtual trip to New York City and San Francisco that is different from the cities they know, and also enjoy exploring and discovering the tweets and weaving together the stories of each city’s residents.

The SIGGRAPH 2019 Immersive Pavilion program is open to participants with an Experiences badge and above. Preview all installations and register for the conference, 28 July–1 August, in Los Angeles.

As a 3D interactive engineer and new media artist, Yang Liu explores the paradigms and possibilities of immersive interaction in VR, AR, and future video games. He works at thatgamecompany and previously worked at Oculus. His past work includes tools for planning drone trajectories in VR/AR, an immersive experience of walking through a city, and tools for taking pictures and for sound.

Botao Hu is the founder of Amber Garage, a Silicon Valley-based creative art and tech studio. He is a future-reality computing creative and inventor, new media art producer, and robotics expert. He specializes in robotics algorithms (localization and mapping), augmented reality, real-time computer graphics, and robotic cinematography. He received an M.S. in computer science from Stanford University and a B.S. in computer science from Tsinghua University. Before founding Amber Garage, he worked at Google, Microsoft, HKUST, Pinterest, Twitter, and DJI.

As a composer and pianist, Ran Duan (Dr. RD) completed a double major at Oberlin College, studying composition with Lewis Nielson and piano with Lydia Frumkin. His piece Four Falsehoods (2008) was selected for the album Aural Capacity No.5, a collaborative CD recording of Oberlin Conservatory student works, and for performance at the Kennedy Center in Washington, D.C. He partnered with the leading software-instrument provider 8Dio to create showcase soundtracks and won the 8Dio Stand Out composing contest.
