Copyright: Victor Zappi and Sidney Fels, 2017-2022

The Hyper Drumhead is a novel digital musical instrument that allows for the visualization and manipulation of 2D sound waves in real time. On Wednesday, 10 August at SIGGRAPH 2022, the project will be featured as a Real-Time Live! demonstration. Its main developer, Victor Zappi, is ready to showcase how the instrument can be used to intuitively create and control sounds. The presentation will conclude with a short rock/electronic piece. In the interview below, Victor talks about the inspiration for the Hyper Drumhead, his team’s successful collaboration, the supporting roles of both tradition and innovation in digital music, how he’s preparing for the big finale, and more:

SIGGRAPH: It’s one thing to hear about a transformative use of computer graphics and technology; it’s even cooler to physically hear and see that innovation right in front of you. What inspired you to create a new digital musical instrument called “The Hyper Drumhead”?

Victor Zappi (VZ): The origin of the Hyper Drumhead was quite unexpected! Its design stems from a larger research project from a few years ago, focusing on vocal synthesis. At that time, Prof. Sidney Fels and I were exploring novel physical models capable of simulating in real time how air waves propagate in the human vocal tract — a big challenge in the context of physics-based vocal synthesis. We teamed up with Dr. Andrew Allen, and together we designed a piece of software capable of leveraging the rendering pipeline to accelerate acoustic models of realistic vocal tract geometries.

As the main developer of this novel audio/visual system, I spent countless hours testing all its features by using and misusing it. To my surprise, this exploration unearthed a hidden musical potential extending way beyond vocal synthesis. Sidney, Andrew, and I are all experts in novel musical instrument design, so we agreed to create a new version of the system geared toward music making. It took several years and required refactoring the physical model and designing a custom hardware interface, but we are extremely satisfied with the result!

SIGGRAPH: Technical details aside for a moment, the Hyper Drumhead essentially can be enjoyed by anyone who can draw shapes and has a sense of musical exploration. The range of possible sounds and control feels limitless. Was that kind of accessibility part of your vision? If so, why?

VZ: Yes, from the beginning it was my intention to design an instrument that could be fun to explore. And I believe that the feeling of accessibility you mention derives from the fact that the Hyper Drumhead can be considered both an instrument and a meta-instrument. Each combination of shape, material, and type of excitation leads to different sounds that in turn call for idiosyncratic interaction techniques. This may seem daunting at first. The instrument is not easy to play, because it is not always possible to predict the effect of a modification of the model, nor is it easy to decide what to modify to target a specific sound. However, the Hyper Drumhead also can be approached as a blank slate, a place to create shapes and materials from scratch and make them emit sound. This process allows the musician to create virtual instruments and understand their acoustic behavior, thanks to both sonic and visual feedback. That’s why it is a meta-instrument, too. And there is no right or wrong here, only exploration.

SIGGRAPH: You’ve pointed out recently that the design of new digital musical instruments is based in tradition, not just innovation. How do those seemingly opposite forces combine in this project?

VZ: Thanks for asking, because this is an important point! The way we make music with the Hyper Drumhead is quite original. There are no keys or notes, and the excitation of the instrument can vary much more than in other designs, traditional and even digital ones. However, its fundamental interaction paradigm was designed to share a lot with successful instruments we all know.

First of all, sound is “immediate.” When we press a key on a piano or pluck a string on a violin, we make a sound, even if we have no training on the instrument whatsoever. Likewise, on the Hyper Drumhead, there is no need to enter menus or select options to output a simple sound. It happens as soon as we touch its surface. And much like the case of the piano or the violin, the experience doesn’t stop there! There are intermediate, advanced, and even extended techniques that can be leveraged to play a large array of sounds, but they require practice and long-term commitment. Finally, some of the skills acquired on traditional musical instruments facilitate this learning process and make the Hyper Drumhead even more accessible. Examples are the ability to play rhythms on percussion instruments and the bimanual finger dexterity developed on keyboards or aerophones.

In technical terms, these design features are called “low entry fee,” “high ceiling,” and “skill transfer.” There is a large body of literature that discusses how they shaped the development of some of the most successful traditional instruments ever, and how important they are for designs driven by digital innovation.

SIGGRAPH: Can you share the gist of how the main components of the Hyper Drumhead work together to enable users to create and control novel sounds?

VZ: The instrument is composed of three main components. The first one is a computer that crunches all the math and takes care of inputs and sound output. It runs Linux and is equipped with a gaming graphics card and an external audio interface. The good news is that there is no need to interact with said computer when playing the Hyper Drumhead! It remains “under the hood.” Then, the main bulk of the instrument is a retro-projected glass surface that works both as a display and as an input device. It tracks up to 100 touch points, so that one or more musicians can draw shapes, change acoustic parameters, and excite the instrument. I’d say that we can consider this surface the “actual” instrument, at least from a performative standpoint. The third and last component is a MIDI foot pedal. It can be used to change the behavior of each finger touch on the fly (for example, switching from excitation to drawing) and allows for a variety of more advanced actions like the creation and recall of presets.

SIGGRAPH: This project will be featured as a Real-Time Live! demonstration at SIGGRAPH 2022. What are you looking forward to most about the event?

VZ: I am extremely excited to see the other demonstrations! Maybe it is not surprising at this point, but above all I hope to find inspiration for novel musical instrument designs. I think that transformative technologies cross domains and applications!

SIGGRAPH: You plan to conclude the demo by performing a short rock/electronic piece. Are you practicing for that, or will it be more like an unrehearsed jam?

VZ: The piece is scripted but leaves a little bit of space for improvisation. I have been rehearsing a lot with Prof. Mike Frengel over the last two months. Luckily, I know the Hyper Drumhead very well at this point, so I feel confident! Mike will play the guitar, and the output of his guitar will be used to excite some of the shapes drawn on the Hyper Drumhead. It’s the first time I will play a piece with this setup!

Real-Time Live! at SIGGRAPH 2022 showcases an electrifying sample of the latest and most advanced interactive projects in real time. Participants both in Vancouver and watching from home will feel the excitement of real-time rendering! Register here for the event. Now through 11 August, save $30 on new registrations using the code SIGGRAPHSAVINGS.

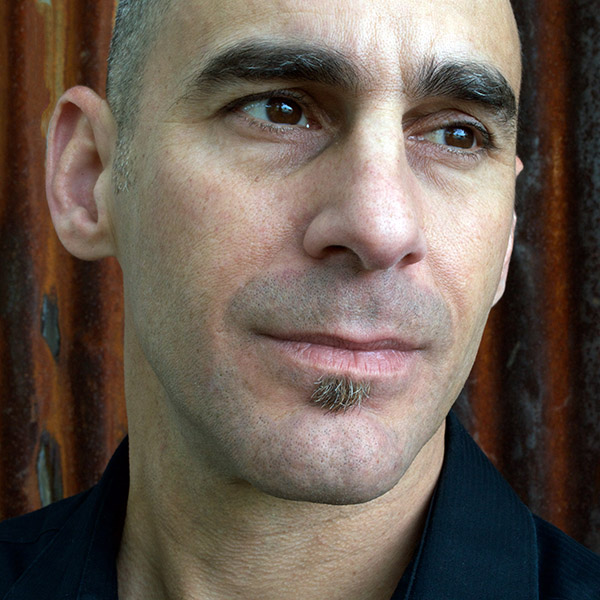

Victor Zappi is an assistant professor of music technology at Northeastern University. Being both an engineer and a musician, he focuses on the design and use of new interfaces for musical expression: How can we use today’s most advanced technologies to build novel musical instruments? In what ways can these instruments comply with and engage the physical and cognitive abilities of performers as well as audiences? And what new forms of musical training and practices are required to master them? Victor’s research interests span virtual and augmented reality, physical modeling synthesis, music perception and cognition, and music pedagogy.

Mike Frengel is a composer, performer, researcher, software developer, and educator. He had the great fortune to study with Jon Appleton, Charles Dodge, Larry Polansky, Denis Smalley, Allen Strange, and Christian Wolff. His works have received international recognition and have been included on the Sonic Circuits VII, ICMC’95, CDCM Vol.26, 2000 Luigi Russolo, and ICMC 2009 compact discs, and are performed at music events around the world. His book, “The Unorthodox Guitar: A Guide to Alternative Performance Practice,” is available through Oxford University Press. His latest compact disc, “Music for Guitar and Electronics,” is available through Ravello Records. Mike is currently on the faculty of the music department at Northeastern University, where he teaches courses in music technology. He is also the founder of Boom Audio Technologies, LLC, a software company devoted to bringing high-quality audio software to market. The company’s first product is Soundbug, an audio editor for macOS.

Sid Fels is a Distinguished University Scholar at the University of British Columbia. He was a visiting researcher at ATR Media Integration & Communications Research Laboratories in Kyoto, Japan, and had previously worked at Virtual Technologies Inc. in CA, USA. He is internationally known for his work in human/computer interaction, biomechanical modeling, neural networks, new interfaces for musical expression, and interactive arts, with more than 400 scholarly publications. Sid received his doctorate and master’s degree in computer science at the University of Toronto, and his bachelor of science degree in electrical engineering at the University of Waterloo.

Andrew (Drew) Allen is a spatial audio researcher from Walterboro, SC, USA. Prior to research, he was a classically trained musician and composer. Most recently, he was a primary contributor to Resonance Audio by Google and currently develops new spatial DSP algorithms for Microsoft’s Project Acoustics.