Photo credit: Gökhan Tugay Şeker

Amplifying the voices of sexual assault survivors, “Cacophonic Choir” — both a SIGGRAPH 2020 Art Papers and Art Gallery selection — uses machine learning through carefully spaced agents to demonstrate the effect of the mass media on survivors’ stories. We caught up with creators Hannah Wolfe, Şölen Kıratlı, and Alex Bundy to learn more about their inspiration, algorithms, and audio.

SIGGRAPH: Talk a bit about the process for developing both the physical installation and the accompanying Art Paper, “Cacophonic Choir.” What inspired you?

Cacophonic Choir Team: As survivors of sexual assault, we found the surge in media coverage of the #MeToo movement overwhelming. We wanted to express the feeling of being inundated by these stories.

To develop the machine-learning aspect of the work, we needed access to stories of sexual assault for training data. We searched for an online forum, looking for a place where people can share their experiences with sexual assault. That’s when we found the When You’re Ready Project, which became the source of our training data.

SIGGRAPH: How many people were involved? How long did it take? What was your biggest challenge?

Cacophonic Choir Team: The project was a collaboration between the three of us — Hannah, Alex, and myself. The first version of the project took about seven months (February 2019 to September 2019) from concept to exhibiting at Contemporary Istanbul. We met on a weekly basis, sharing prototypes, designing the system, and integrating our components.

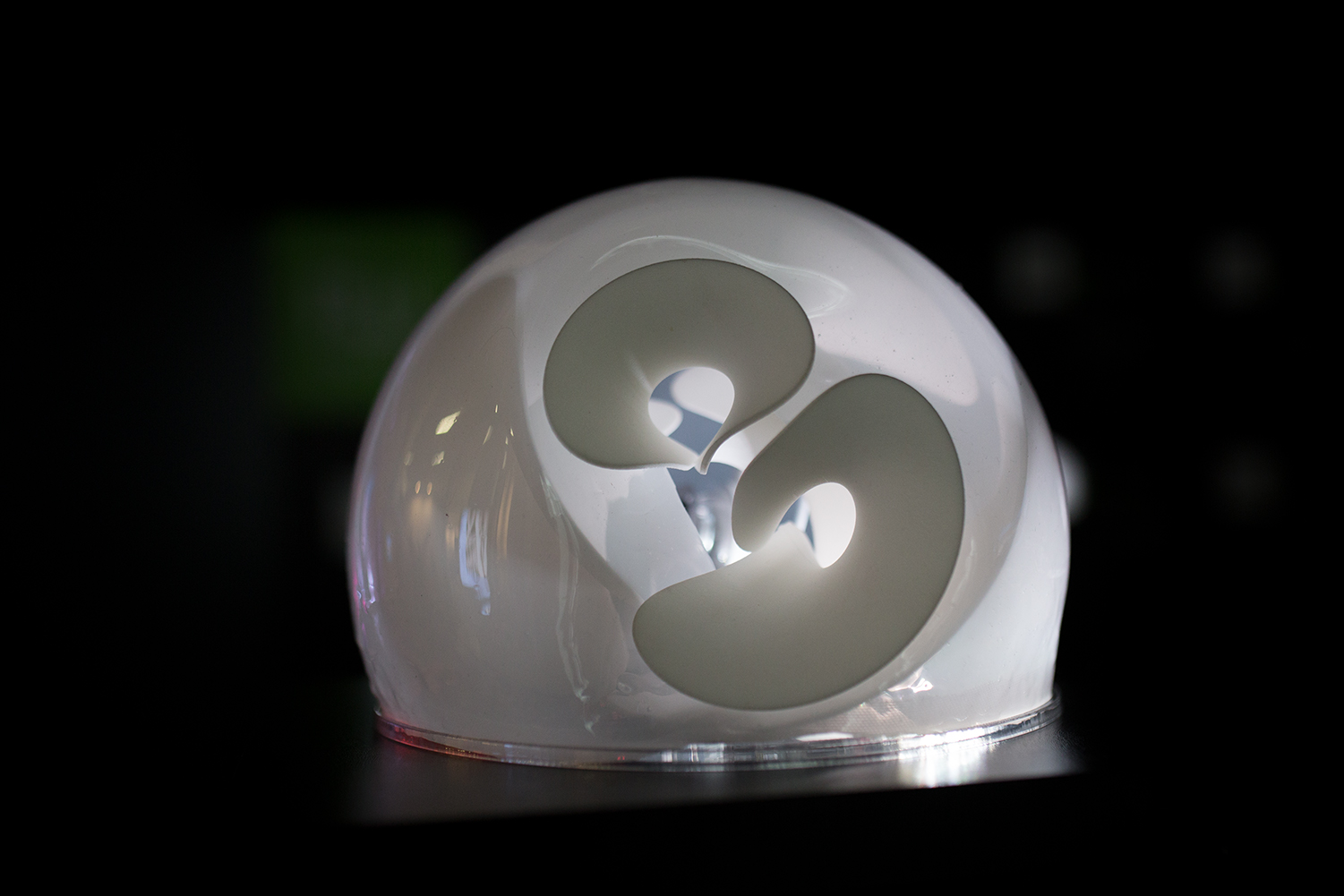

One of the biggest challenges we ran into was with computational power for real-time computation on a Raspberry Pi. Another major challenge was embedding the protruding 3D-printed object in a translucent sphere and designing it in a way that could be easily transported for an international exhibition.

SIGGRAPH: Let’s get technical. How did you use Long Short-Term Memory (LSTM) recurrent neural network models in collaboration with the When You’re Ready Project?

Cacophonic Choir Team: The stories that the agents tell are manipulated using a generative algorithm, with the degree of manipulation based on the visitor’s proximity to the agent. To generate text that reflected different levels of semantic clarity, we used the textgenrnn library to create Long Short-Term Memory (LSTM) recurrent neural network models, which were trained on over 500 stories of experiences of sexual assault from the When You’re Ready Project website.

To generate text at different levels of semantic clarity, the neural network model was trained for 199 epochs and saved at several points along the way. The original implementation of the system chose the next word in real time: the program loaded the required trained model and generated a word based on the previously played words. The program seeded the request with fewer previous words at farther distances and more words at closer distances.
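As an illustration only (this is not the team's code), the mapping from distance to a saved model and a seed length could look like the sketch below. The checkpoint list, the sensor range, and the seed cap are assumed values, and `clarity_level` and `seed_words` are hypothetical names; the interview gives only the final epoch count of 199.

```python
# Assumed values: only the final epoch count (199) comes from the interview.
CHECKPOINT_EPOCHS = [1, 10, 50, 100, 199]  # hypothetical save points
MAX_DISTANCE_CM = 200.0                    # hypothetical sensor range
MAX_SEED_WORDS = 20                        # hypothetical cap on seed length


def clarity_level(distance_cm):
    """Map a distance to a checkpoint index (0 = least trained)."""
    clamped = min(max(distance_cm, 0.0), MAX_DISTANCE_CM)
    closeness = 1.0 - clamped / MAX_DISTANCE_CM
    return round(closeness * (len(CHECKPOINT_EPOCHS) - 1))


def seed_words(history, distance_cm):
    """Return the previously played words to use as the seed.

    Farther visitors get a shorter seed, closer visitors a longer one.
    """
    level = clarity_level(distance_cm)
    top = len(CHECKPOINT_EPOCHS) - 1
    count = 1 + (MAX_SEED_WORDS - 1) * level // top
    return history[-count:]
```

Under these assumptions, a visitor at the edge of the sensor range would hear output from the earliest checkpoint seeded with a single word, while a visitor right next to the agent would hear output from the fully trained model seeded with the last 20 words.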

While this ran in real time on a laptop, the process was too slow on a Raspberry Pi. So for the final installation, we chose 65 stories with 1,000–1,500 words and pre-generated 1,500 words at each level of clarity. For the closer distances, we used models trained for longer and seeded them with a greater number of words from the original text. Each time an agent wakes up, it chooses a story at random. Then, based on the proximity sensor reading, it chooses the next word from the text that we had pre-generated.
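A minimal sketch of this lookup approach follows, under stated assumptions: the `Agent` class, its method names, and the nested-list layout are hypothetical, and the sketch assumes a single word position shared across clarity levels, which the interview does not specify.

```python
import random


class Agent:
    """Plays back pre-generated text instead of running a model live."""

    def __init__(self, pregenerated, rng=None):
        # pregenerated[story][level] is a list of words;
        # level 0 is the least clear text (assumed layout).
        self.pregenerated = pregenerated
        self.rng = rng or random.Random()
        self.story = None
        self.position = 0

    def wake(self):
        """Pick a story at random and restart from its first word."""
        self.story = self.rng.randrange(len(self.pregenerated))
        self.position = 0

    def next_word(self, level):
        """Return the next word at the clarity level for the current distance."""
        words = self.pregenerated[self.story][level]
        word = words[self.position % len(words)]  # wrap at the end of the text
        self.position += 1
        return word
```

Because every word is a list lookup, the cost per word no longer depends on loading or evaluating a neural network, which is the kind of saving that matters on a Raspberry Pi.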

SIGGRAPH: Why did you settle on granular audio processing?

Cacophonic Choir Team: Initially we were inspired by the gibberish that Google’s WaveNet creates when no words are given and the effect of training time on the resulting speech. So, for the initial design, we made a human voice sound more robotic from a distance, but it was difficult to make a robotic-sounding voice gradually transition to a more natural-sounding voice.

We experimented with other forms of audio processing, including distortion and frequency-domain filtering. We also experimented with filtering to make the voices seem more distant and muffled when users were far from the sculptures, but we determined that such processing was unnecessary because of the natural filtering effects of distance, as well as the “cacophonic” effect of all nine agents speaking at once.

We settled on granular processing to create a stuttering effect. With granular processing, the transition between unprocessed and fully processed audio felt more gradual. Grain sizes are random, but in general are relatively large (between 0.13 and 1 second) with very little overlap, as grain re-triggering occurs between 2 and 15 times per second.

We chose these parameters to create an effect where some phonemes in the sound files are repeated or skipped. Whether any grain is skipped or repeated is determined randomly, with the odds of a skip or repeat increasing as the viewer’s distance from the work increases. The amount of processing and degree of randomization of the granular parameters are calculated throughout the playback of the words, so that the amount of processing may change mid-word.
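The skip-and-repeat logic can be sketched without any audio library as a plan of which grains to play. This is a simplified illustration, not the installation's audio code: `plan_grains`, `amount_at`, the 0.8 probability ceiling, and the even split between skips and repeats are all assumptions, and overlap and re-trigger timing are ignored. Only the grain-size range comes from the interview.

```python
import random

MIN_GRAIN_S, MAX_GRAIN_S = 0.13, 1.0  # grain-size range quoted in the interview
MAX_EVENT_PROB = 0.8                  # assumed ceiling on skip/repeat odds


def plan_grains(duration_s, amount_at, rng):
    """Return the (start, length) grains to play, in playback order.

    amount_at(t) gives the processing amount in [0, 1] at source time t
    (0 = viewer close, 1 = viewer far). It is re-read for every grain,
    so the amount of processing can change mid-word.
    """
    grains = []
    t = 0.0
    while t < duration_s:
        end = min(t + rng.uniform(MIN_GRAIN_S, MAX_GRAIN_S), duration_s)
        grain = (t, end - t)
        amount = min(max(amount_at(t), 0.0), 1.0)
        if rng.random() < amount * MAX_EVENT_PROB:
            if rng.random() < 0.5:
                grains.extend([grain, grain])  # repeat: play the grain twice
            # otherwise skip: the grain is not played at all
        else:
            grains.append(grain)  # unprocessed: play the grain once
        t = end
    return grains
```

With `amount_at` fixed at 0, the plan covers the recording exactly once in order; as the amount rises toward 1, more grains are dropped or doubled, which produces the stutter.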

SIGGRAPH: What emotions and thoughts do you hope to evoke from both readers of the research and those who view/experience the project?

Cacophonic Choir Team: The stories of sexual assault survivors are the core of the work. Naturally, these stories are likely to evoke different emotions in different people. We find all of the stories to be unpleasant, but important to listen to and reflect on. The intention was that the sculptural forms and the sonic interactions would draw people in.

The intimacy of having to approach and lean in toward the sculptures also gives an impression of closeness and involvement that merely reading or listening to the stories alone does not convey. We hope that the interactive audio element leads people to reflect on the mediated nature of these stories when published in digital media — what is lost and what is gained when sensitive stories like these are shared on social media platforms?

Those reading the paper may not have the visceral experience they would have of seeing the piece in person, but they should develop similar feelings and ideas when reflecting on the work. Those reading about the piece who have their own artistic and technical practices may also find some inspiration for their own work.

SIGGRAPH: What’s next for “Cacophonic Choir”? How do you plan to continue your work of changing the narrative?

Cacophonic Choir Team: Currently we are exploring a digital version of “Cacophonic Choir,” which we will implement for the virtual exhibition at SIGGRAPH 2020. A virtual environment removes aspects of the experience that physical presence provides, but also creates different affordances, like more accurate proximity measurement and the potential to increase the number of agents.

This type of work and prior works, like lauren woods’ “American Monument” about black lives and police brutality, are important for future works aimed at uplifting under-represented voices.

SIGGRAPH: What are you most looking forward to in participating in your first SIGGRAPH conference?

Cacophonic Choir Team: Since this will be our first exhibition at SIGGRAPH, we are excited for the opportunity to share our work and make connections.

SIGGRAPH: Your work also received quite the feature in Leonardo, being both an Art Gallery and Art Papers submission! What was your experience working with the publication?

Cacophonic Choir Team: We are thrilled to have both the artwork and paper accepted. Working on the publication was a natural process since it was primarily about the artwork. We had very clear ideas about what we wanted to write about from the get-go. Once we started writing, we actually ended up with more content than fit within the length requirement! While this made the paper more concise, we definitely have much more to say.

SIGGRAPH: What advice do you have for someone looking to submit to Art Gallery or Art Papers for a future SIGGRAPH conference?

Cacophonic Choir Team: When writing, try to be as precise as you can in describing your work and your intentions behind it. Also, make sure to describe the connection of the work to a larger critical discourse as best you can. While the concept behind a work is important, it also needs to be documented in a professional manner, including a high-quality, polished video.

Acceptance of Art Gallery submissions is dependent on a variety of conditions, such as the curator’s [and jury’s] vision of the exhibition or the venue’s spatial requirements. The latter [can be] particularly restrictive for larger art installations.

Hannah Wolfe is an assistant professor of Computer Science at Colby College. Her artwork focuses on the relationship between body and technology, giving computers and robots biological qualities. Her work has been shown at ISEA, NIME, CHI, and Contemporary Istanbul, as well as published in IEEE Transactions on Affective Computing. She earned a Ph.D. in Media Arts and Technology and an M.S. in Computer Science from the University of California, Santa Barbara. Her research spans human-robot interaction, affective computing, computational creativity, and media arts.

Şölen Kıratlı is an artist, architect, researcher, and lecturer. Her work is interdisciplinary in nature and lies at the intersection of sound, interactive media, and digital design and fabrication. Her work has been exhibited at SIGGRAPH Asia, CURRENTS New Media, Contemporary Istanbul, and NIME (New Interfaces for Musical Expression), amongst other places. She is currently a Ph.D. candidate at the Media Arts and Technology Program at the University of California, Santa Barbara, where she focuses on digital media practice and research. She holds a B.Sc. in architecture and an M.Arch.

Alex Bundy is a sound artist, musician, software developer, and philosopher. His most recent work involves interactive sound design and the creation of sound-based environments for meditation. He was a member of the experimental rock band Yume Bitsu, and has been recording and performing as Planetarium Music and under his own name for 20 years. He earned his Ph.D. in philosophy from the University of California, Santa Barbara, and his philosophical work focuses on epistemic issues surrounding disagreement.