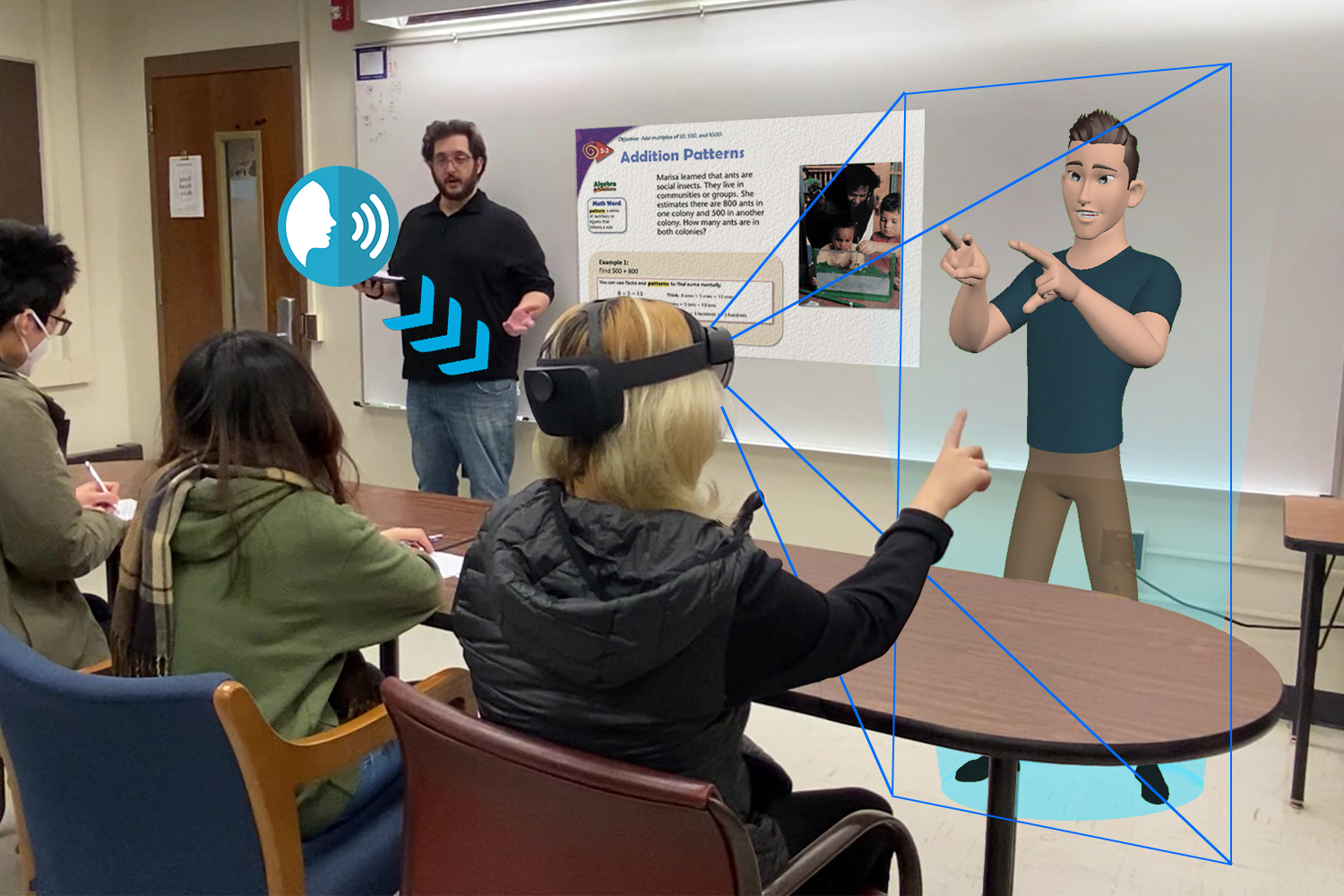

© A DHH student is observing and interacting with the virtual character interpreter in a classroom setting through HoloLens.

A team from Purdue University harmonizes innovation and technology to create a new learning system for deaf and hard-of-hearing (DHH) students. In “Holographic Sign Language Interpreters,” signing avatars, observed through wearable mixed reality smart glasses (Microsoft HoloLens), translate speech to Signed Exact English (SEE) in real time. SIGGRAPH caught up with the team behind the assistive technology project to learn more about its development and how it contributes to the conversation around accessibility in the classroom.

SIGGRAPH: Share some background about your SIGGRAPH 2022 Educator’s Forum Talk “Holographic Sign Language Interpreters.” What inspired it?

Fu-Chia Yang (FY): Our research lab has been working on digital sign language avatars for years. In the past, we explored different wearable XR devices through building applications and incorporating sign language pedagogical agents for deaf/hard-of-hearing students. With the introduction of HoloLens 2 and the enhancement of developer APIs, we decided to bring “Holographic Sign Language Interpreters” into our research scope.

Christos Mousas (CM): We built a mixed reality (MR) application for DHH students. Our system detects audio input and displays the corresponding sign animation in near real time. The overall goal of our research is to improve DHH college students’ accessibility to educational materials and DHH children’s learning of math concepts through the application of holographic wearable MR.

SIGGRAPH: Tell us about the development of “Holographic Sign Language Interpreters.” Did you face any challenges in its creation?

FY: The most challenging part was coming up with the speech-to-sign integration for the prototype with a limited sign language dataset and testing out compatible APIs for the HoloLens device. Our team plans to enhance this part of the application by implementing machine learning and natural language processing techniques, which we see as the best long-term approach to speech-to-sign integration.

CM: The implementation pipeline of our projects consists of two main steps. First, we collected four K–1 math lessons through a motion capture session. A professional deaf signer was recruited to sign in Signed Exact English (SEE) for K–1 math lectures. We utilized motion capture technology to capture SEE animation for the holographic avatar. Second, we designed the MR application in the Unity game engine, utilizing the Azure Speech-to-Text SDK. The system takes input speech (audio) from the instructor and converts it to English text. The converted sentences are then analyzed to identify the corresponding signs, prosodic markers, and prosodic modifiers. The system then triggers the sign animation segments in the dataset and renders a lifelike holographic sign language interpreter who signs in SEE.
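The text-to-sign step described above can be illustrated with a minimal Python sketch. It is not the team's Unity implementation: the clip names, the `SIGN_CLIPS` table, the punctuation-based prosodic marker, and the decision to report unmatched words rather than sign them are all assumptions made for illustration. It starts from text that a speech-to-text service has already produced.

```python
import re

# Hypothetical clip library: English word -> name of a motion-capture clip.
# A real dataset would be far larger; these entries are illustrative only.
SIGN_CLIPS = {
    "two": "clip_two",
    "plus": "clip_plus",
    "three": "clip_three",
    "equals": "clip_equals",
    "five": "clip_five",
}


def tokenize(sentence):
    """Lowercase the sentence and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", sentence.lower())


def prosodic_marker(sentence):
    """Guess a sentence-level marker from final punctuation (an assumption)."""
    stripped = sentence.strip()
    if stripped.endswith("?"):
        return "question"
    if stripped.endswith("!"):
        return "emphasis"
    return "neutral"


def plan_animation(sentence):
    """Map recognized text to an ordered list of clips to trigger.

    Words with no clip in the library are collected under "missing"
    so that gaps in a limited dataset stay visible.
    """
    clips, missing = [], []
    for word in tokenize(sentence):
        if word in SIGN_CLIPS:
            clips.append(SIGN_CLIPS[word])
        else:
            missing.append(word)
    return {
        "marker": prosodic_marker(sentence),
        "clips": clips,
        "missing": missing,
    }


if __name__ == "__main__":
    print(plan_animation("Two plus three equals five."))
```

Because SEE follows English word order, a word-by-word lookup is a reasonable first approximation here. Reporting unmatched words separately makes the coverage limits of a small sign dataset explicit.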

SIGGRAPH: How did you develop the avatars?

FY: The avatar was originally created by Salas (2020). We purchased the advanced skeleton rig and re-rigged it to fit into our motion capture pipeline. The avatar animation was then captured through a full body, face, and hand tracking system simultaneously by recording a professional signer.

SIGGRAPH: How does this system contribute to greater accessibility and adaptability in the classroom?

FY: Utilizing digital sign language avatars can benefit DHH students in several ways. Human interpreters can often be costly and are not always available, especially in higher education, whereas digital sign language interpreters can provide greater accessibility to end users. Moreover, course materials can be retrieved and reviewed after class, and digital agents can be tailored to users’ preferences, providing greater adaptability for the DHH community. Similar applications can be used outside of the classroom as well, including for daily communication and entertainment activities.

SIGGRAPH: How do you hope this system will be used moving forward? What do you want users to take away from it?

FY: The purpose of creating this system is not to replace human interpreters but to provide more options and alternatives to DHH individuals. Multiple aspects of the system still need refinements and adjustments to better fit end users’ requirements and expectations. We hope to keep improving the system and be able to test it in real classroom scenarios.

CM: The holographic avatars can be used by deaf learners in the classroom, at home, and while interacting with digital educational materials. However, there are multiple other areas where holographic avatar interpreters could benefit DHH people, such as in the market or other public places. We want users to think that technological advances could ease and improve our daily lives.

SIGGRAPH: What can SIGGRAPH 2022 participants expect when they learn more about “Holographic Sign Language Interpreters” during the conference?

FY: When it comes to assistive technology, human factors and input are essential. We built this prototype utilizing several cutting-edge technologies, and the main goal was simply bringing out the best experience for DHH students in class.

CM: We are planning to present the developed pipeline of our application. We hope participants will benefit by understanding current technological limitations and potential future directions. After all, synthesizing holographic sign language interpreters is still a challenge, and researchers from various fields should work together to achieve better results.

SIGGRAPH: SIGGRAPH is excited to host its first-ever hybrid conference. What are you most looking forward to about the experience?

FY: I look forward to meeting contributors online and streaming Talks from people attending the conference in person.

CM: We have experienced hybrid conferences before (e.g., ACM CHI 2022). We know there are a lot of challenges when organizing such a hybrid event. However, we are really looking forward to the social events.

Calling all educators! On Monday, 8 August from 9:30 am–4:45 pm, gather together at SIGGRAPH 2022 for Educator’s Day. This special event welcomes a fantastic lineup of trainers including Epic, Autodesk, Adobe, and ILM. Educator’s Day content will touch on the topics of visual programming, gamifying learning, shaping the future of animation, and so much more. Educator’s Day is available to registrants at the Full Conference/Full Conference Supporter, Virtual Conference/Virtual Conference Supporter, Experience Plus, and Experience levels. Register now and add Educator’s Day to your agenda.

Fu-Chia Yang is an M.S. student at Purdue University majoring in computer graphics technology. She graduated from The Chinese University of Hong Kong with a B.S. degree in computer science. Her research interests include virtual reality, augmented reality, character animation, and human-computer interaction.

Christos Mousas is an assistant professor at Purdue University and director of the Virtual Reality Lab. His research revolves around virtual reality, virtual humans, computer animation, applied perception in computer graphics, and immersive interaction. From 2015 to 2016, he was a postdoctoral researcher at the Department of Computer Science at Dartmouth College. He holds a Ph.D. in informatics and an M.Sc. in multimedia applications and virtual environments, both from the School of Engineering and Informatics of the University of Sussex, and an integrated master’s degree in audiovisual science and art from Ionian University. He is a member of ACM and IEEE and has been a member of the organizing and program committees of many conferences in the virtual reality, computer graphics/animation, and human-computer interaction fields.

Nicoletta Adamo is a professor of computer graphics technology and Purdue University Faculty Scholar. She is an award-winning animator and graphic designer and the creator of several 2D and 3D animations that aired on national television. Her area of expertise is character animation and character design, and her research interests focus on the application of 3D animation technology to education, human-computer communication (HCC), and visualization. She is co-founder and director of the IDEA Laboratory.