Image credit/copyright: CC BY-NC-SA

One of the hottest seats at the conference, SIGGRAPH 2019 Real-Time Live! was a sight to behold. (If you missed our recap, click here!) As the show wrapped, there was one thing left to do: Vote on the night’s best demo. The judges and audience members deliberated, ultimately selecting “GauGAN: Semantic Image Synthesis With Spatially Adaptive Normalization” as the most impressive showstopper. We caught up with one of the team members behind the project to learn more.

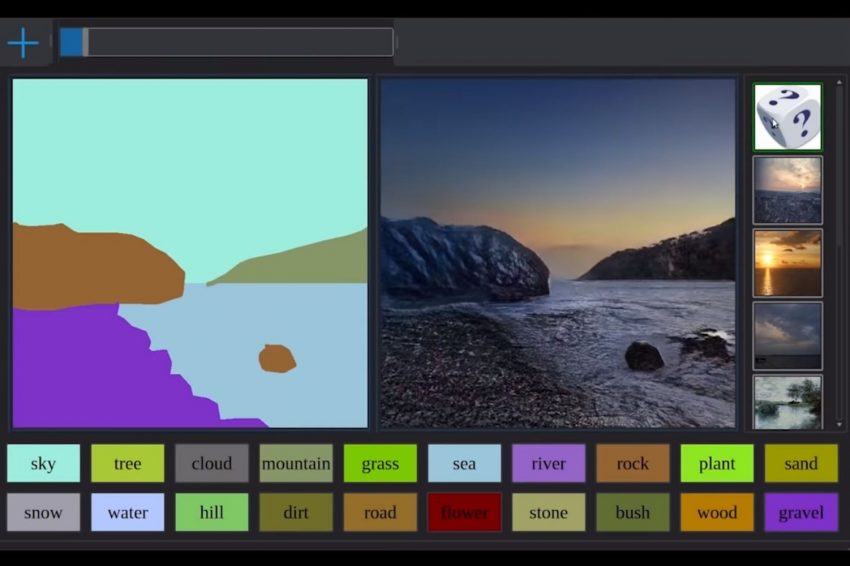

SIGGRAPH: Your Real-Time Live! demonstration blew the audience and judges away in Los Angeles, taking both the Best in Show and Audience Choice honors (the latter, a brand-new award). Tell us how the project came to be and the team that is involved.

Ming-Yu Liu (MYL): As researchers working on semantic image synthesis, the ultimate goal is harnessing the power of AI for converting human interpretable semantic representations to photorealistic images. The starting point of our project is very simple. We just want to improve our previous generation image synthesis algorithm, pix2pixHD. After achieving the project goal, we use the GauGAN app to demonstrate how much better the new algorithm performs. What we did not expect is that many people find this research outcome, in its preliminary form, already useful. We feel so encouraged and thankful that our demo was favored by both the audience and judges. The GauGAN team consists of four deep learning researchers, one software guru, and one artist.

SIGGRAPH: In the video below, two members of your team demo GauGAN. At a high level, what is unique about GauGAN in terms of real-time technology?

MYL: GauGAN is unique in the sense that it is the first deep learning-based method for converting users’ doodles to beautiful landscape images in a convincing and real-time fashion. It is the result of new deep learning methods deployed on NVIDIA’s cutting-edge Tensor Core deep learning accelerators.

SIGGRAPH: Walk us through the development process. How long did it take from ideation to final demonstration? What was the biggest challenge you encountered?

MYL: NVIDIA Research has been working on utilizing AI for generating images since 2017. Our team developed an algorithm called pix2pixHD, which is the foundation of the GauGAN work. Around November 2018, we had a prototype system that could synthesize images at 256×256 resolution. Then, around February 2019, we further improved the system so that it could synthesize images at 512×512 resolution or higher. One of our researchers took our hacky research code and made it run in super real-time.

Regarding the major challenge, I would say it was in the algorithm design. We identified the main issues in converting segmentation masks to photorealistic images using GANs in the early stages of the project; however, it took quite some effort to find a solution that could both solve the problem and allow real-time performance.

SIGGRAPH: Put simply, what excites you about this work? How do you see GauGAN being used by creators of the future?

MYL: GauGAN represents NVIDIA’s effort in harnessing AI for content creation. We are so delighted to see that, even in its preliminary form, artists have found GauGAN useful for boosting productivity. We see that, in the current form, GauGAN is useful for concept artists to quickly iterate their design. We also see GauGAN as a recreation tool for people to express their creativity, lower their stress, and live a happier life.

SIGGRAPH: The theme for SIGGRAPH 2019 was “thrive.” As innovators, can you each name one thing that inspires you to thrive?

MYL: To me, creating truly creative machines for the social good has been my life mission. It inspires me to thrive.

SIGGRAPH: We’re taking things to D.C. come next summer. Can readers expect to see a new demo from your team in 2020? Any other future plans?

MYL: We are working on several extensions of GauGAN ranging from improving its temporal stability to incorporating 3D modeling. We hope that we can succeed in these endeavors and contribute a demo to SIGGRAPH 2020’s Real-Time Live! in D.C.

Ming-Yu Liu is a principal research scientist at NVIDIA Research. Before joining NVIDIA in 2016, he was a principal research scientist at Mitsubishi Electric Research Labs (MERL). He earned his Ph.D. from the Department of Electrical and Computer Engineering at the University of Maryland, College Park in 2012. In 2014, he received an R&D 100 Award from R&D Magazine for his robotic bin picking system. His semantic image synthesis paper and scene understanding paper were best paper finalists at the 2019 CVPR and 2015 RSS conferences, respectively. His research focuses on generative image modeling, with the goal of giving machines a human-like capacity for imagination.